How We Silently Switched Mode’s In-Memory Data Engine to DuckDB To Boost Visual Data Exploration Speed

Michael Albers, Senior Software Engineer

April 5, 2023

NaN minute read

With new user growth, it was time for a new in-memory data engine

Our customers have always told us that nothing delivers faster insights than Mode. Write a query. Get immediate results. Visualize. Share the insight.

Part of the technology responsible for this speed is an in-memory data engine that we call Helix. Helix makes data exploration snappy for the constant stream of Mode users whose work adds up to millions of queries per day, whether they’re using SQL or drag-and-drop tools. In other words, Helix is a set of tools, technologies, and custom software that helps Mode run faster behind the scenes, and it’s primarily powered by an in-memory database.

When Mode first launched Helix in 2019, it was originally powered by VoltDB, an in-memory database implemented in Java. A few years later, however, we had reached the limit of what VoltDB could provide.

Mode’s user base grew by quite a lot, and by last summer, it was time to move to a next-generation database that would continue to provide our customers with quick and reliable results while supporting our next phase of growth. We needed to choose a new in-memory data engine that would not only support more users, but could also power increasingly complex visualizations and more user-defined functions, on larger and more demanding datasets.

The need for speed: How we landed on DuckDB

The replacement database, first and foremost, needed to be fast. Users wouldn't see their SQL query results until data was loaded into our in-memory engine, so it had to support very fast writes, at a scale of hundreds of tables per second at peak. We also relied on the engine to support interactive visual exploration, so it needed to process queries very quickly—ideally, returning complex pivots on millions of records in under a few hundred milliseconds. It also needed to have similar features to VoltDB in order to continue to provide the same abilities and tools our users depend upon. We evaluated a few different options: OmniSci, MemSQL, SQLite, and DuckDB and ultimately decided to choose DuckDB as the replacement database.

The replacement database, first and foremost, needed to be fast.

DuckDB is an in-process OLAP database management system and is written in C++ which makes it incredibly fast. DuckDB provides a number of client APIs, multiple ways to import data, and a wide variety of other features that we intend to leverage in the future. Additionally, the DuckDB team is easy to work with. They have been incredibly helpful with bug fixes and providing information about how to best use their product. Almost from the start we knew we had made a good decision moving to DuckDB.

Did you see MotherDuck’s viral post Big Data is Dead? On Wed. April 19, Mode’s Co-founder and CTO, Benn Stancil, will join MotherDuck’s Founder and CEO, Jordan Tigani, to talk about what analysis looks like in the post-big-data era world of small data. Register here.

Register here

Webinar: MotherDuck and Mode on the End of Big Data

Join our conversation with MotherDuck’s Founder and CEO, Jordan Tigani, and Mode’s CTO and Co-founder, Benn Stancil, on Wed. April 19, about how companies are navigating data-informed decision making using smaller data.

Mapping the transition plan: Switching databases seamlessly for our users

With the replacement database decided, the next step was to change out the database without our users seeing any impact to the Mode experience.

Our goals for this project were modest. We wanted to have a one-for-one swap without any impacts to performance and then, ultimately, have our users experience faster visual data exploration from the power of this new technology.

Changing out such a fundamental piece of an architecture is not a trivial change. The in-memory database is a critical component of generating the visualizations that Mode users rely upon daily. Accomplishing the switch with zero downtime and zero negative impacts would be difficult, but necessary.

The plan for the transition was relatively simple: run both databases at the same time while gradually adding responsibilities to DuckDB in the background. Then, once DuckDB was production ready, perform a hard cutover.

The first step of this plan was straightforward. We needed to refactor the Helix code to be able to run VoltDB and DuckDB simultaneously while implementing the mechanism to gradually transition to DuckDB.

The next step would happen in a sequence of phases. Each phase would transparently replicate another part of Mode’s existing workload onto the new DuckDB infrastructure. But, we also needed the ability to pause the transition or potentially rollback to any previous phase at any time. We also needed the ability to measure DuckDB’s quality and performance relative to VoltDB at each phase, so we devised several phases to the rollout.

Phase 1: Ingestion fire & forget

Before we get into the details of this phase, it’s helpful to know more about how Helix works. Our users’ query data is imported into the in-memory database in a process we call ingestion. This involves Helix creating a table in the in-memory database where the data is inserted. As long as the data continues to be accessed, it remains in the database. After a period of inactivity, it is unloaded. It can be reloaded at any point in the future.

During the ingestion fire & forget phase, user data was ingested into both VoltDB and DuckDB. And while the data would go through the full lifecycle of ingestion and unloading in both databases, the data in DuckDB was redundant to our production systems, and not accessed at all during this phase. That way, if there had been any bugs with data ingestion, it wouldn’t create a problem for our customers. The error would be logged (and later investigated) while Helix continued to perform as normal—hence the ‘fire & forget’ part of the name.

During this phase, we measured a few different aspects of DuckDB. First, we compared the data import speed of both databases as identical data was being ingested into each. Second, we monitored the memory usage of DuckDB to make sure it would not grow excessively with the current workload and that it could safely scale up as the workload increased.

Phase 2: Query fire & forget

Once we could reliably ingest data into DuckDB, we began to execute queries against it. The exact same queries—barring any slight syntax differences inherent in using two separate databases—were executed by VoltDB and DuckDB. Again, only the results from VoltDB were returned to the user. In order to safeguard against any unforeseen problems with DuckDB, all queries run against it were run asynchronously; if DuckDB happened to be too slow or if there were errors in those queries, the user experience was not impacted.

In this phase, we were able to measure more parts of DuckDB. Our main concern was the query speeds between VoltDB and DuckDB. The speed needed to be the same, or better, to meet existing performance standards. This phase also gave us a chance to thoroughly test our DuckDB query generation code in production. This was possible since the DuckDB queries were completely transparent to the user.

Phase 3: Query compare results

The next step was to compare the results from VoltDB against DuckDB. This step allowed us to further refine our query generation code as well as to ensure that DuckDB could replace VoltDB without affecting our users.

In this phase, each and every value returned from VoltDB was compared against each and every value returned by DuckDB for every query. As we quickly learned, this wasn’t as straightforward as we initially thought. The problem wasn’t that the returned data differed between VoltDB and DuckDB. It didn’t. The problem was other nuances we didn’t foresee.

Data ordering was the biggest obstacle to overcome. Without an ORDER BY clause in the query, each row returned by the databases didn’t necessarily correspond to each other. We solved for this by annotating the comparison data with a flag that indicated if the results came from explicitly ordered queries. However, even with an ORDER BY clause, other problems arose. For one, VoltDB and DuckDB have differing opinions on where NULL values should go, first or last, so we had to correct for this to make sure the user experience remained the same with DuckDB.

These and similar small issues all made query comparisons more difficult and time-consuming than originally anticipated. Our customers rely on Mode to make critical decisions, and the worst mistake we could make would have been a computational error that returned incorrect results. As time-consuming as this process was, it was necessary.

Phase 4: Using DuckDB exclusively

This was the final phase of the transition. We only moved to this phase once we were 100% satisfied with DuckDB’s performance from the previous phases. During this phase, we flipped the switch: DuckDB served all user requests, bypassing VoltDB completely. But, even during this phase we could still revert to VoltDB if an issue was discovered.

Other tools that helped along the way: an observability platform and feature flags

At Mode, we’re avid users of feature flags. We use LaunchDarkly to manage our flags; it’s a great product with many different kinds of flags to practically suit any need you may have.

The rollout phases were defined by variations on a single LaunchDarkly feature flag. A change to this flag would allow us to move our entire system between phases without deploying a new version of our software. Using feature flags also made it possible for us to revert back to the voltDB infrastructure immediately if DuckDB's performance faltered.

An important feature of LaunchDarkly that was heavily used during the DuckDB rollout was its ability to have a percentage rollout, keyed on user defined values. This feature allowed us to roll out DuckDB usage by customer, user dataset, or by machine running Helix. Using this feature, we could isolate DuckDB usage in different configurations to verify functionality and assess impacts. It also allowed us to gradually scale up DuckDB usage to make sure it could support the workload demands.

Throughout the rollout process we relied almost exclusively on Honeycomb to measure DuckDB performance. We also love Honeycomb at Mode. Their observability platform for Open Telemetry traces and custom events is a critical component in Mode day-to-day operations. And it was essential for the DuckDB transition.

With Open Telemetry and Honeycomb, the Helix team could directly compare data ingestion and query times between VoltDB and DuckDB. We also utilized their custom event ability to handle the data comparison; each field which failed comparison created a new event which was sent to Honeycomb.

The results: Mode is faster with DuckDB

In transitioning to DuckDB, we wanted Mode’s visual data exploration capabilities to be faster, even with the addition of new users—and it is. Our broadest goal in transitioning was to be no worse than VoltDB, if not better. And, unofficially, the motto was “if no one notices we did this, it’s a success.”

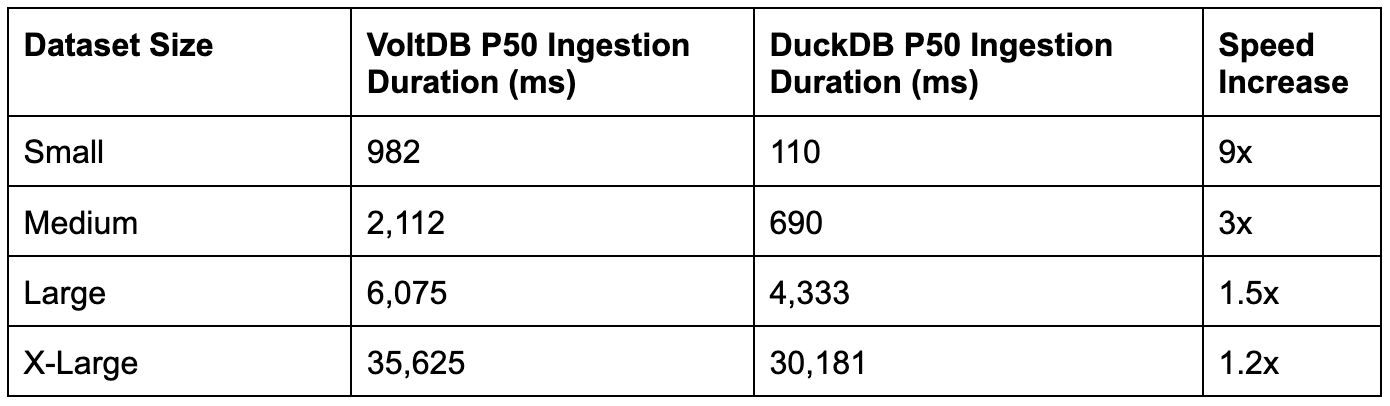

Technically, we had more specific goals. We wanted DuckDB's performance to be within a certain percentage of VoltDB's, in both ingestion times and query times. During initial usage during development we found out that DuckDB was probably going to provide substantial performance gains, and this in fact, ended up being the case in production.

The bottom line is that everything is faster with DuckDB.

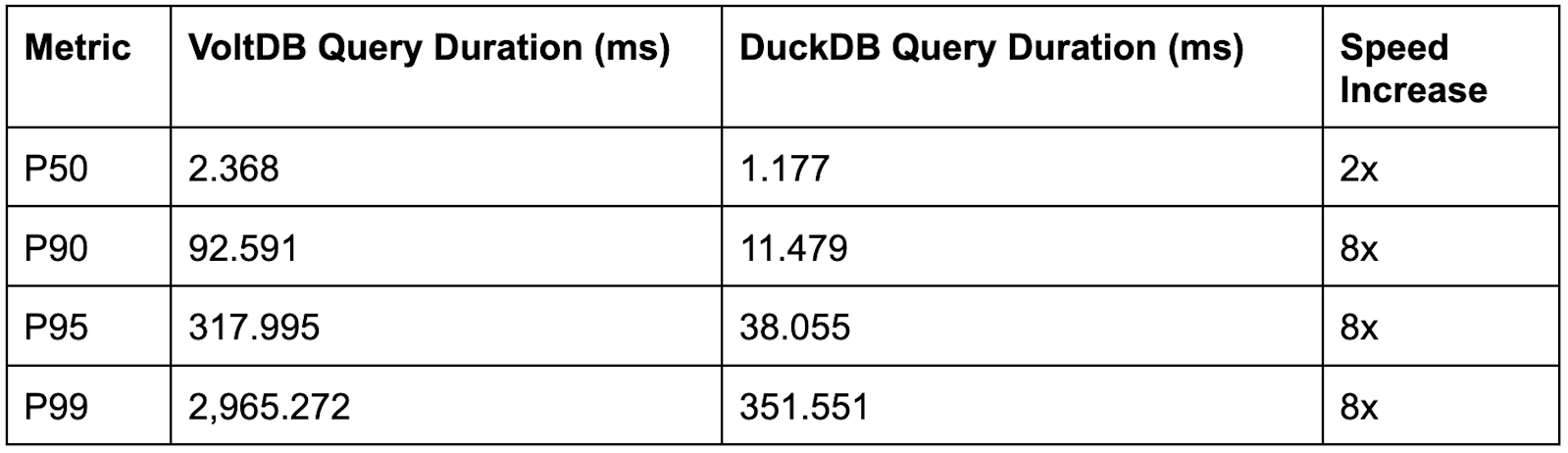

As mentioned earlier, Honeycomb observability tools were key in assessing the performance of DuckDB relative to VoltDB. The two tables below are built from Honeycomb data and show that data ingestion times dropped across the board in DuckDB. (The sizes are a coarse bucketing method which groups the size of a user’s query result based on the number of rows in the result.)

As did query times, quite substantially.

What exactly do these numbers mean for someone using Mode?

First, query results are more quickly returned to the user after their query completes.

Second, while visualizing their data in Mode’s Visual Explorer, changes are processed and displayed significantly faster when using DuckDB than with VoltDB.

This is important, because when users do ad hoc analysis in Mode—a process that could look like creating a dataset with iterative SQL and inspecting it visually from tons of different angles— every millisecond counts. Delivering speed is where DuckDB shines.

A future of speed with DuckDB for faster BI

Thanks to DuckDB, we’ve continued to find even more ways to improve performance gains. DuckDB has also given us the opportunity to make other internal improvements to the Mode system which have helped our platform run more efficiently.

DuckDB itself continues to improve as well. The team has improved reliability, resource usage, and performance over the last few releases. Early on, even before the transition was complete, Mode fully became a Gold member in the DuckDB Foundation to invest in the safeguarding of long-term maintenance of DuckDB. This speaks to how much we believe in this product.

With DuckDB, Mode’s platform performance is faster, there are fewer errors, maintenance is easier, deployments are faster, and new feature development has been simplified—which ultimately means faster turnaround time on features for our customers. In sum, everything in Mode has become better with DuckDB and we can’t wait to see that continue for Mode’s future.

We'd like to acknowledge the engineers who worked on this project: Ryan Kennedy, Talia Trilling, Kiran Pandi, Kevin Gay, Amir Rajan, Divya Ranganathan Gud, John Wes Rodgers Jr., and Nascif Abousalh-Neto.

MotherDuck and Mode on the End of Big Data

Join our conversation with MotherDuck’s Founder and CEO, Jordan Tigani, and Mode’s CTO and Co-founder, Benn Stancil, on Wed. April 19, about how companies are navigating data-informed decision making using smaller data

Get our weekly data newsletter

Work-related distractions for data enthusiasts.